Y our CIO has the business case approved. The transformation director has mapped the roadmap. The vendor presentations were impressive. But are you about to spend millions on AI platforms and build on a broken foundation?

Here's what most enterprises refuse to acknowledge: organisations will abandon 60% of AI projects through 2026 because their data isn't ready. The financial damage is staggering. 42% of companies abandoned most AI initiatives in 2025, up from just 17% in 2024.

This isn't a technology problem. It's a data preparation crisis that leadership refuses to see until they've already burnt through the budget.

60%

Project Abandonment Rate

42%

Initiative Failure in 2025

73%

Data Quality Barriers

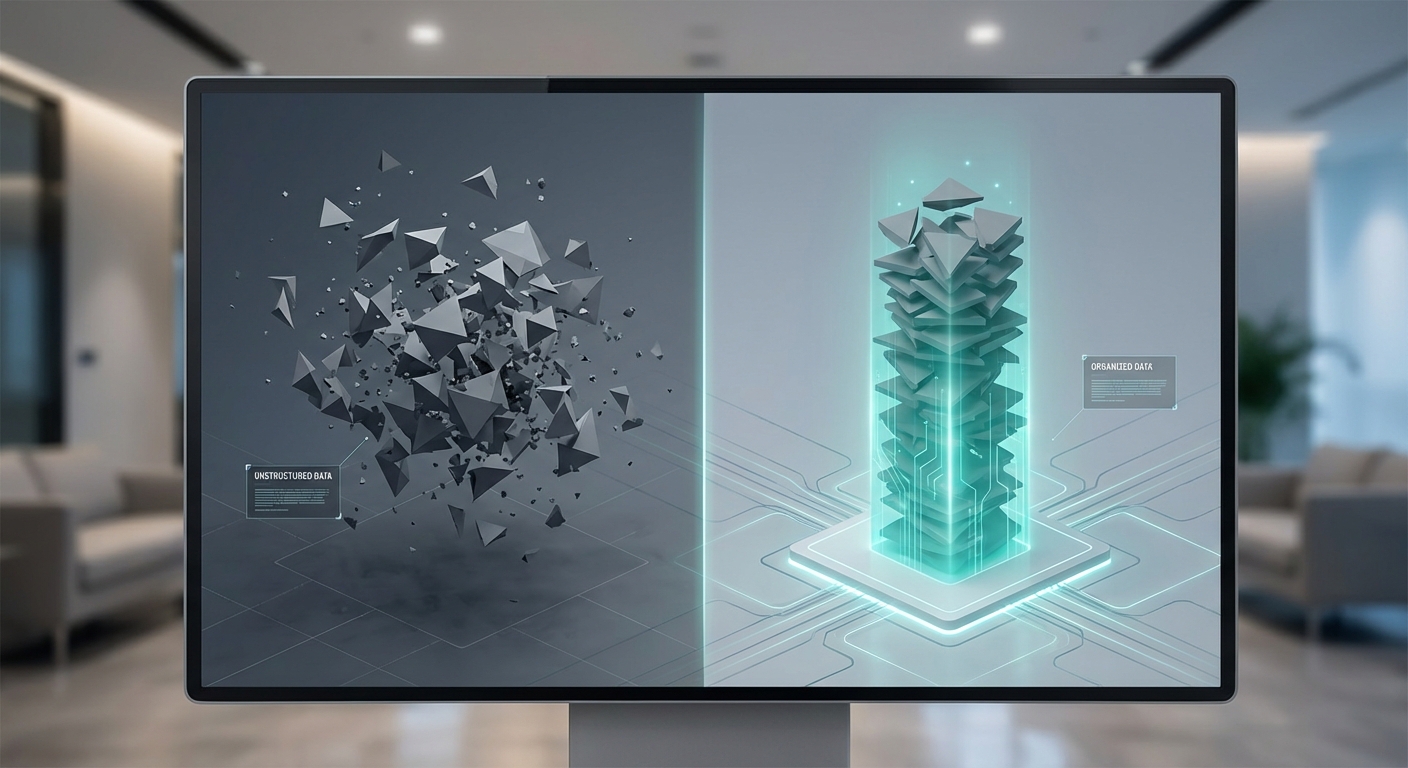

The Theatre of AI Readiness

Walk into any enterprise today and you'll hear the same story. They've built data lakes. They've implemented Microsoft Graph. They've centralised everything. They think they're AI-ready.

What they've actually done is create visibility of chaos rather than solving it. Data lakes have focused on collecting large volumes of data, but many have become complex platforms where data quality is hard to manage and useful datasets are difficult to find.

Microsoft Graph is brilliant for connecting data sources and showing you what you've got. But it exposes the real problem—most organisations don't actually know what data they have, where it lives, or how it all connects. You discover multiple versions of customer data across different systems, none of which agree with each other.

The tool shows you the mess. It doesn't clean it up for you. So you end up with a bigger sorting problem than you started with. You've dumped everything in one place, which sounds like progress, but you haven't done the hard work of understanding what's relevant, what's redundant, what's accurate, and what's an expensive distraction.

The Question No One Asks First

Before you clean a single dataset, before you build a data lake, before you implement governance frameworks—ask this: What data would actually be valuable?

Not what data do you have. Not what data you can collect. What would create value if you had it, regardless of whether it exists anywhere in your systems today. This is the question most organisations never ask. They start with their existing data and try to find AI use cases for it. They build from what they have rather than what they need. It's backwards.

Here's what happens when you invert this: you spend months cataloguing and cleaning data that turns out to be irrelevant to your AI objectives. You normalise customer records across six systems only to discover the AI use case needs transaction patterns, not contact details.

AI excels at digesting vast amounts of information and spotting patterns humans wouldn't. But giving it too much irrelevant data incurs massive unnecessary costs, creates instability, triggers hallucinations, and slows down what you're trying to achieve. This is a curation problem, not just a structure problem. Knowing what to give AI and what not to give it matters more than ensuring it's all in the right format.

But you can't curate effectively if you don't know what's valuable in the first place. So the value question comes first. What insights, predictions, or automations would move the needle for your business? What decisions could be better or faster with the right data? Then—and only then—you ask: does that data exist? If it does, is it good enough? If it doesn't, could you create or capture it more cheaply than excavating it from legacy systems?

Sometimes it's faster and cheaper to generate new, clean data than to spend months untangling decades of accumulated data debt. But here's what most organisations miss: it's not just about the tooling. The biggest data quality problems aren't technical—they're human. Engineers logging job times differently. Field service teams clocking on whilst still having breakfast at home. Sales teams using CRM fields as they see fit rather than as designed.

You can have perfect systems, brilliant AI tooling, and comprehensive governance frameworks. But if the people creating the data aren't capturing it consistently and accurately, you're building on sand. AI trained on faulty data accepts it as truth. An experienced human looking at individual records might recognise something's wrong—an engineer who apparently completed a three-hour job in 20 minutes, or logged into a site 50 miles away without travel time. AI sees patterns in the noise and draws logical conclusions from illogical inputs.

This is why knowing what data would be valuable matters so much. Once you know, you can assess not just whether the data exists and what state it's in—but whether the process that creates it is actually capable of producing reliable data. If it's not, no amount of cleaning legacy data will help. You need to fix how it's captured first.

That might mean redesigning processes. Simplifying data entry. Changing behaviours. Training people differently. Sometimes it means admitting that particular data will never be reliable if it depends on human input at the point of collection, and finding alternative sources or proxies instead.

This is unglamorous work. It's not about AI platforms or data lakes. It's about understanding how work actually happens and ensuring the data that matters gets captured in a way that reflects reality. Most companies skip this step. They assume the data in their systems reflects what actually happened. Then they wonder why AI delivers bizarre recommendations that make sense mathematically but not operationally. That's when the economics become clear.

Why Data Preparation Costs More Than Everything Else

Think about building a house. You don't write a cheque for £1 million to a main contractor before you've got planning permission, an environmental survey, and architectural designs. You write smaller cheques first. You prove the thing is possible before you commit the serious money.

Yet enterprises do the opposite with AI. They pick a solution early and lock in costs upfront, hitting peak commitment at the same time they're at peak uncertainty.

The data tells the story. Winning programmes earmark 50-70% of timeline and budget for data readiness—extraction, normalisation, governance, quality dashboards. But approximately 96% of businesses begin AI projects without sufficient high-quality training data, requiring unplanned investments of $10,000-$90,000.

Data preparation often takes 70% or more of total time and budget, yet it remains the most underestimated expense.

But here's what changes when you start with value: you're not preparing all your data. You're preparing the data that matters for the specific outcomes you're chasing. The organisation that spends months normalising customer records across six legacy systems without knowing why is wasting money. The organisation that identifies "we need customer lifetime value predictions" and then prepares only the transaction and interaction data required for that model is investing strategically. Same activity—data preparation. Completely different outcomes.

The Leadership Blind Spot

The Reality Gap

90% of data professionals at director or manager level believe company leaders aren't paying adequate attention to bad or inaccurate data. Non-management employees are more likely than executives to say major AI data quality issues remain.

The Readiness Illusion

81% of AI professionals say their company still has significant data quality issues, yet 85% believe leadership isn't addressing these issues. The disconnect is killing projects before they start.

But here's the deeper problem: even when leadership does pay attention to data quality, they're often focused on cleaning everything rather than asking what data actually matters. They authorise comprehensive data governance programmes without first identifying what outcomes those programmes should enable. It's the same mistake at a higher budget level.

Why Bad Data Becomes Catastrophic at Scale

Here where the value-first approach becomes critical for risk management. Once you know what data you need and you've assessed its quality, you can make an informed decision about how much autonomy to give your AI systems. One area of challenge is understanding what AI is capable of delivering and what it's sensible to try and get it to deliver. Just because it can doesn't mean it should.

The more you want AI to do, the more power, autonomy, and flexibility you need to give it. The trade-off is direct: benefits are linked to the level of risk involved. And that risk is directly proportional to your data quality.

AI systems inherit and amplify data quality issues. When data is inconsistent, incomplete, biased, or outdated, both models and the agents built on top of them are less accurate and prone to spreading issues at scale. This is why preparing the right data properly isn't optional it's the foundation for everything that comes after.

This creates what researchers call the "verification tax"—organisations spend time and money validating, correcting, or discarding outputs, wiping out promised efficiency gains. The risk escalates with autonomous systems. Errors in a chatbot conversation are immediately visible to users. Errors in autonomous agent actions can cascade through business processes before anyone notices.

An autonomous agent optimising engineer schedules based on faulty time-logging data might create a month's worth of impossible schedules before operations realises the problem. By then, you've damaged customer relationships, frustrated your workforce, and created operational chaos. The agent executed flawlessly. It just executed based on lies.

Now imagine you'd spent six months cleaning data that didn't matter for this use case, whilst the data you actually needed remained untouched. That's what happens when you don't start with value.

How This Actually Works

1. Define Value Outcomes

Start by asking what business outcomes would move the needle. Reduce time-to-decision? Predict failures? Optimise scheduling? OnBuy.com didn't ask "what AI can we use?" They identified £1 million monthly losses and then looked at the data.

2. Assess Data & Process Integrity

Once you know what's valuable, ask: Does the data exist? Is the process that creates it reliable? If engineers clock on while having breakfast, your AI will optimize around fiction. Fix the capture before you fix the data.

3. Strategize Collection

Could you capture this data fresh more cheaply than excavating it from legacy systems? Sometimes it's faster to generate new, clean data than to untangle decades of debt. Successful implementations achieve typical ROI of £3.70 per pound invested.

What Strategic Data Preparation Actually Costs

The financial impact of getting this wrong extends far beyond failed AI projects. Over a quarter of organisations estimate they lose more than $5 million annually due to poor data quality, with 7% reporting losses of $25 million or more. At a macro level, poor data quality costs the US economy approximately $3.1 trillion annually.

But those losses include the cost of cleaning everything rather than focusing on what matters. Yet AI spending continues to accelerate—forecasted to surpass $2 trillion in 2026, with 37% year-over-year growth. The cost of poor data quality scales proportionally.

Organisations that treat data as a product investing in master data management, governance frameworks, and data stewardship are seven times more likely to deploy generative AI at scale. But the ones winning aren't treating all data equally. They're prioritising data preparation based on value, not comprehensiveness.

The Pragmatic Path Forward

You don't need to solve every data problem before you start with AI. You need to identify what data would create value, assess whether it exists and in what state, evaluate whether the processes creating that data are reliable, then decide whether to prepare it, fix how it's captured, create it fresh, or source it differently.

Start with the value question. Then assess your data state for that specific use case. Prove readiness in one targeted area. Scale AI investment as certainty increases. This isn't about perfection. It's about knowing what data matters and ensuring that specific data is ready before you commit millions to platforms that can't deliver without it.

The enterprises winning with AI aren't the ones with the biggest budgets or the flashiest platforms. They're the ones who asked "what would be valuable?" before they asked "what data do we have?" They also asked "how is this data created?" before assuming it reflected reality. They identified specific outcomes. They prepared specific data. They proved value in tight, controlled environments. Then they scaled investment as confidence grew.

That's the Uncertainty Curve in action. Match your spending to certainty. Build momentum the right way. Swerve the big expensive mistakes. Because the alternative is watching 60% of your AI projects fail before they start, joining the 42% of companies who abandoned most initiatives last year, and explaining to the board why millions disappeared into a data swamp you didn't know you had.

Your choice.

Written by

Lyndon Docherty

A specialist in data strategy and AI transformation, Lyndon helps organizations navigate the complexity of readiness to deliver tangible business value.

In Collaboration With

David Beggs

David Beggs