I've spent 30 years watching enterprises buy technology they don't know how to use.

The pattern repeats. A CIO sees a demo. The vendor promises transformation. The contract gets signed. Then the hard bit starts.

AI agents are following the same script.

15% of day-to-day work decisions will be made autonomously through agentic AI by 2028. (Gartner)

33% of enterprise software applications will include agentic AI by the same timeframe.

The technology is arriving whether you're ready or not. But here's what most enterprises miss. This isn't about deploying smarter software. It's about redesigning how humans and machines work together. The question isn't whether AI agents will take jobs. It's whether you can build partnerships that actually work.

Why Agents Are Different

Traditional automation was predictable. You mapped a process. You automated the repetitive bits. Humans handled the exceptions.

AI agents don't work that way. They plan. They adapt. They make decisions across multiple systems. When you connect them to robotics platforms, they start controlling physical processes too.

This creates a new category of work. Not human. Not purely digital. Something in between. I call it the machine partnership problem. You can't just bolt agents onto existing workflows and hope for the best. You need to rethink who does what, when humans intervene, and how you measure success.

Most organisations aren't ready for this.

The Automation Bias Trap

There's a documented pattern called automation bias. People defer to algorithmic outputs even when those outputs are wrong. It's not stupidity. It's human nature.

When you're pushed for time and the system gives you an answer, you trust it.

Now imagine that dynamic playing out across your enterprise. AI agents making thousands of decisions daily. Humans rubber-stamping them because they look plausible. The danger isn't that AI makes mistakes. It's that humans stop noticing.

You need governance structures that assume this will happen. Not if. When.

What Actually Works

I've seen the following work in practice. Not theory. Actual deployments.

Start with bounded autonomy. Give agents clear operational limits. Define escalation paths for high-stakes decisions. Build audit trails for everything.

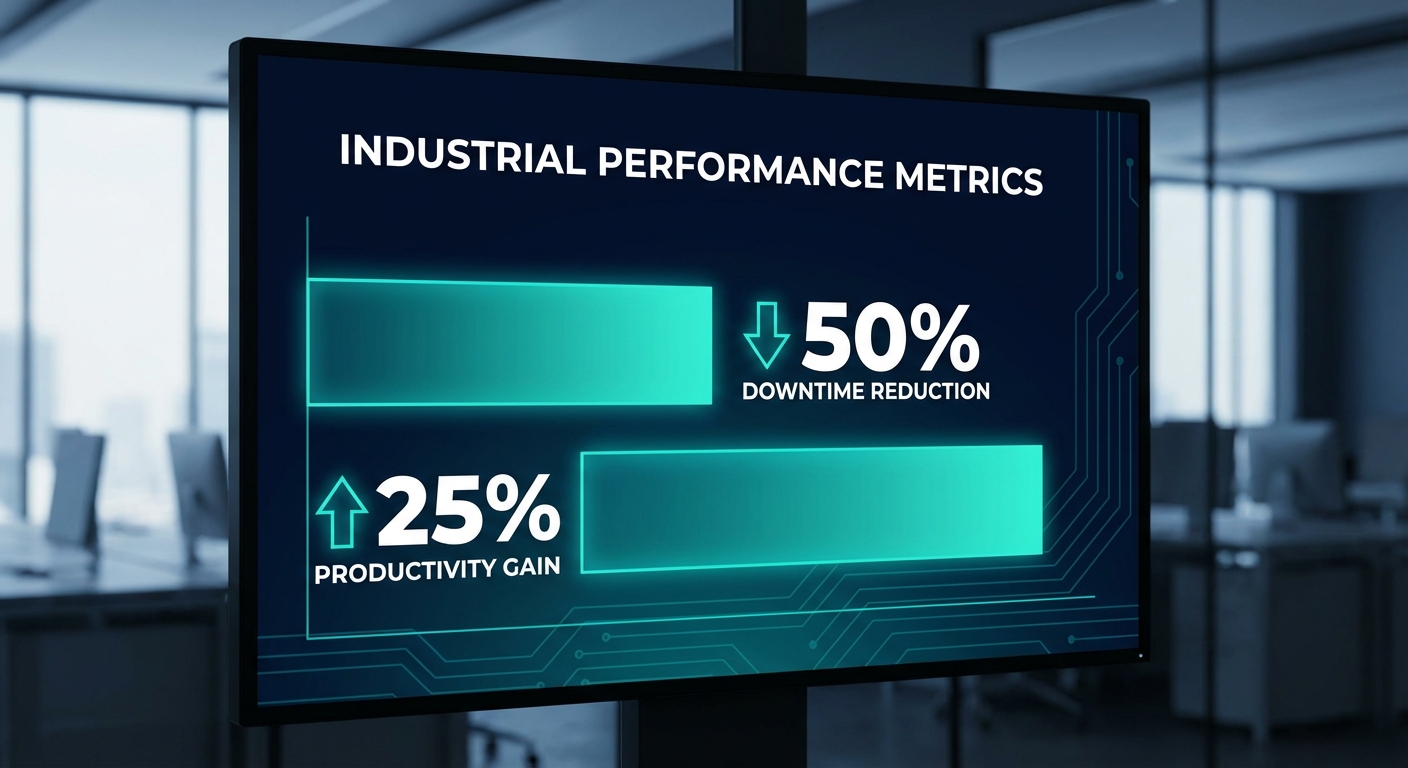

SAP's robotics initiative shows what's possible. Early proof-of-concept applications demonstrate a reduction of up to 50% in unplanned downtime, a 25% improvement in productivity, and significant reductions in operational errors.

Those results came from treating robots and AI as partners, not replacements. Redesign roles, not tasks. Stop thinking about automating individual activities. Think about how humans and machines collaborate across entire workflows.

An operations analyst becomes a workflow orchestrator. A maintenance technician blends digital diagnostics with hands-on expertise. You're not eliminating jobs. You're evolving them.

Invest in AI literacy across the organisation. Not just technical teams. Everyone who touches these systems needs to understand how agents make decisions, where they fail, and when to override them. This isn't optional. It's foundational.

The Skills Question

Here's what nobody wants to say out loud. The skills gap is massive. AI investment across banking, insurance, capital markets and payments is expected to reach $97 billion by 2027. Only a fraction will go towards training the people expected to use these tools. That's backwards.

The technology works. The humans aren't ready.

You need people who can bridge human judgment and machine execution. That's not a skillset most organisations produce today. It's not taught in universities. It's not covered in traditional professional development.

The actuarial profession now requires training on core machine learning and data science skills. The distinction between an actuary and a data scientist is disappearing. That pattern will repeat across industries. If you're not building this capability now, you're already behind.

The Governance Gap

Most enterprises have no idea who's responsible when an AI agent causes loss. Is it the system designer? The business owner? The executive sponsor? You need to answer that question before you scale deployment. Not after an incident.

Leading organisations are implementing what I call "bounded autonomy architectures." Clear operational limits. Escalation paths to humans for high-stakes decisions. Comprehensive audit trails of agent actions.

This isn't about acknowledging AI limitations. It's about designing systems that combine dynamic AI execution with deterministic guardrails and human judgment at key decision points. Governance can't be retrofitted. It has to be embedded from the start.
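To make the idea concrete, here is a minimal sketch of a deterministic guardrail sitting between an agent and the systems it acts on. Everything in it is a hypothetical illustration rather than any vendor's API: the names `BoundedAgentGate`, `Action` and `Outcome`, the single monetary threshold `auto_limit`, and the in-memory audit list are all assumptions. A real deployment would likely need richer limits and durable, tamper-evident logging.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Outcome(Enum):
    EXECUTED = "executed"      # within limits, agent may proceed
    ESCALATED = "escalated"    # high stakes, route to a human
    REJECTED = "rejected"      # outside the agent's remit entirely


@dataclass(frozen=True)
class Action:
    kind: str      # hypothetical action type, e.g. "refund"
    amount: float  # value at stake, used here as a proxy for risk


@dataclass
class BoundedAgentGate:
    """Deterministic check applied to every action an agent proposes."""
    allowed_kinds: frozenset   # operational limit: what the agent may do
    auto_limit: float          # above this value, a human decides
    audit_log: list = field(default_factory=list)

    def decide(self, action: Action) -> Outcome:
        if action.kind not in self.allowed_kinds:
            outcome = Outcome.REJECTED
        elif action.amount > self.auto_limit:
            outcome = Outcome.ESCALATED
        else:
            outcome = Outcome.EXECUTED
        # Audit trail: every proposal is recorded, whatever the outcome.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "kind": action.kind,
            "amount": action.amount,
            "outcome": outcome.value,
        })
        return outcome


if __name__ == "__main__":
    gate = BoundedAgentGate(allowed_kinds=frozenset({"refund"}), auto_limit=500.0)
    for proposed in (Action("refund", 120.0),
                     Action("refund", 5000.0),
                     Action("close_account", 0.0)):
        print(proposed.kind, proposed.amount, gate.decide(proposed).value)
```

The design choice worth noticing is that the gate is plain, predictable code. The agent can be as dynamic as you like, but the limits, the escalation rule and the log do not depend on the model behaving well.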

The objective isn't maximum autonomy. It's optimal orchestration.

The Real Competitive Advantage

Everyone will have access to the same AI technology. The models will commoditise. The platforms will standardise. Competitive advantage won't come from deploying advanced AI. It'll come from how effectively you choreograph the interaction between people, digital agents, and physical machines.

That's an organisational capability, not a technology purchase. It's built through learning, iteration, and disciplined execution. Fast followers can't replicate it by buying the same software.

I've seen this pattern play out with every major technology shift over three decades. Cloud computing. Mobile. Digital transformation. The technology was never the hard part. The people and processes were.

AI agents and robotics are no different. The question is whether you're building partnerships or just buying tools.

Written by

Lyndon Docherty

30-year veteran of enterprise transformation, specialising in the intersection of human capital and emerging technology orchestration.